Many AI solutions simplify the daily routine of experts in different fields. For example, they can use ChatGPT to generate emails and summarize texts. Experts also employ DALL-E since, with a few prompts, they can generate relevant images for their work needs. Engineers can train models to generate specific output based on custom documents or images.

In this article, Volodymyr Andrushchak, Ph.D., our data science team lead, and Oleksandr Kondakov, our senior data science engineer, explain how GANs, LLMs (large language models), and embedding models can be used to generate content based on custom data (documents, FAQ archives, support, etc.). Check out our generative AI use cases developed by the Lemberg Solutions data science team.

What is generative AI?

Generative artificial intelligence (generative AI, or GenAI) is a general-purpose technology designed to generate specific outputs based on training datasets. In simpler terms, it's the creative engine of AI. Unlike traditional AI, which typically analyzes or classifies existing data according to learned or pre-set patterns, generative AI can create original content, whether it's text, images, or music. You can train generative AI on data from a specific domain. This training equips GenAI with the knowledge needed to generate content that fits within that domain's parameters.

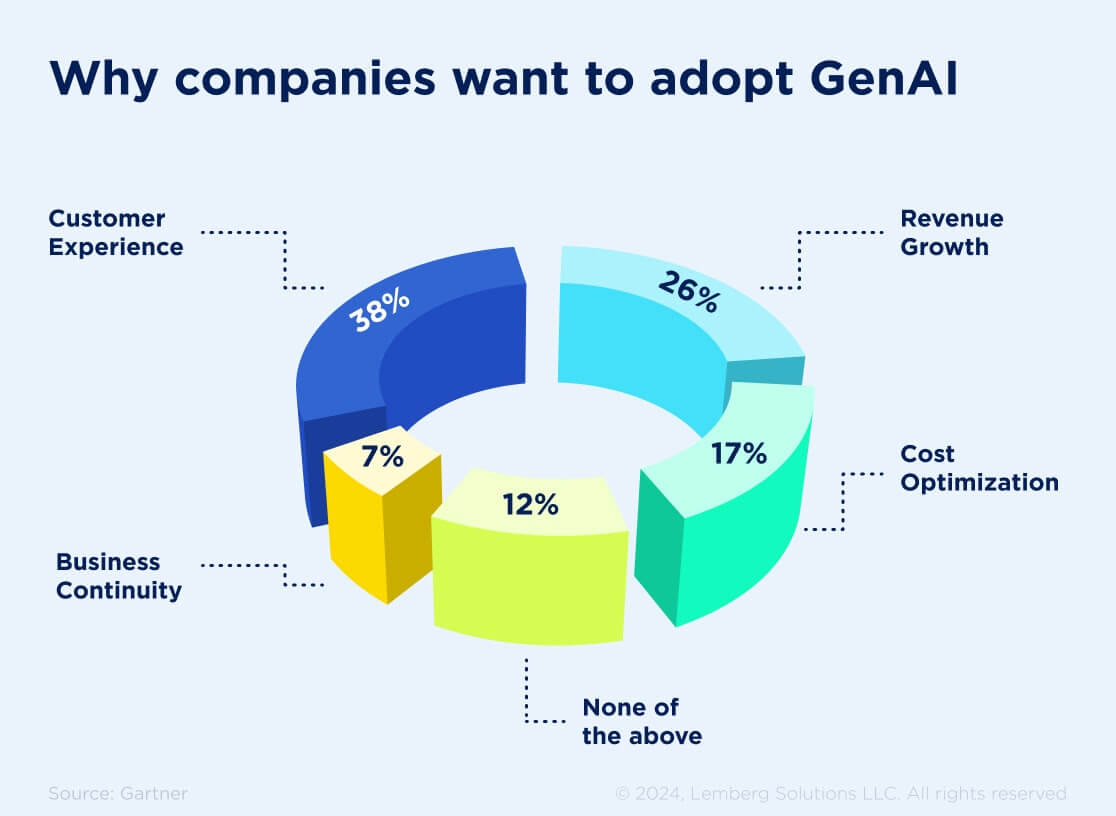

Generative AI initiatives revolve around customer experience, revenue growth, cost optimization, and business continuity. Read on to learn which types of generative AI businesses need to reach these goals.

Generative AI techniques

To make the generative AI solution work, data science engineers apply different techniques, including GANs, LLMs, VAEs, and transformers. Keep reading to learn more about them.

Generative adversarial networks (GANs)

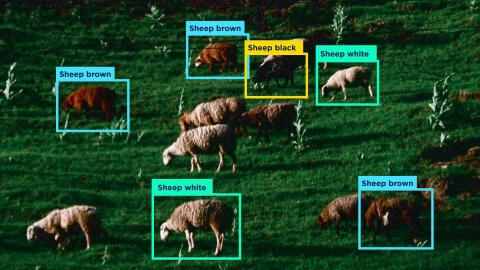

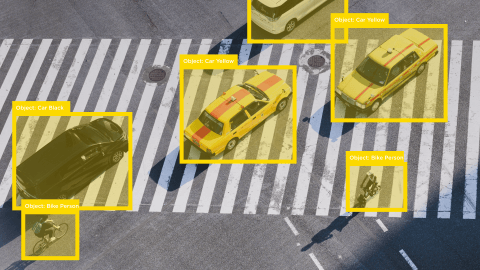

Generative adversarial networks (GANs) are a class of AI algorithms within the field of unsupervised machine learning. A GAN comprises a generator responsible for creating images and a discriminator that tries to distinguish generated images from real ones. Training the two against each other pushes the generator to create images that look similar to the real ones.
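The competition between the generator and the discriminator can be expressed through two loss functions. Below is a minimal sketch of them in plain Python, assuming the standard binary cross-entropy formulation with the non-saturating generator loss. The discriminator scores are made-up numbers for illustration, not results from one of our models.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real images should score 1, generated ones 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating loss: lower when the discriminator scores fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that an image is real).
d_real = [0.9, 0.8, 0.95]
d_fake_early = [0.1, 0.2, 0.05]   # early training: fakes are easy to spot
d_fake_later = [0.45, 0.5, 0.55]  # later training: fakes look more realistic

print("discriminator loss:", discriminator_loss(d_real, d_fake_early))
print("generator loss early/later:",
      generator_loss(d_fake_early), generator_loss(d_fake_later))
```

As the generated images become harder to tell apart from real ones, the generator loss falls and the discriminator loss rises, which is the tug-of-war described above.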

GANs are effective in many domains, including healthcare and art. In the healthcare industry, for example, GANs help to transform MRI images into CT scans.

GANs can be applied in various cases, including image synthesis, classification, and image super-resolution. They play a pivotal role in generating realistic human faces and synthetic data for neural network training.

Large language models (LLMs)

Large language models (LLMs) are deep learning models based on the transformer architecture. This architecture allows LLMs to process text with an understanding of its context and to learn from large amounts of unlabeled text through self-supervised training. LLMs have proved to be effective in generating high-quality text.

LLM-based solutions can index data, which helps streamline search and makes document processing and analysis more efficient. LLMs can also understand complex sentences and interpret user intent. They analyze syntax, semantics, and overall context to improve output accuracy. Search built on an LLM improves the user experience because the results are more relevant to the user’s request. Moreover, an LLM-based system can be improved as new data is integrated, for example by re-indexing documents or fine-tuning the model, which refines its search results and responses.

Variational autoencoders (VAEs)

Variational autoencoders (VAEs) are neural network architectures that can effectively work with high-dimensional datasets (where the number of features is larger than the number of data points). A VAE includes an encoder, which compresses the input into a compact latent representation, and a decoder, which reconstructs data from that representation. Because VAEs can create new data that follows the patterns of the training set, they are a useful tool for generative AI implementation and offer an adaptable framework for working with complex datasets.
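To make the step between the encoder and the decoder more concrete, here is a minimal sketch that assumes the common Gaussian formulation of a VAE: the encoder outputs a mean and a log-variance per latent dimension, a latent vector is sampled from them, and a KL term keeps the latent space close to a standard normal distribution. The encoder outputs below are hypothetical numbers, and the encoder and decoder networks themselves are left out.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), one value per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence between N(mu, sigma^2) and N(0, 1), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv
                     for m, lv in zip(mu, log_var))

rng = random.Random(0)                   # fixed seed for repeatable sampling
mu, log_var = [0.5, -1.0], [0.0, -0.7]   # hypothetical encoder outputs
z = reparameterize(mu, log_var, rng)     # this vector would go to the decoder

print("latent sample:", z)
print("KL penalty:", kl_to_standard_normal(mu, log_var))
```

Sampling new latent vectors and passing them through the decoder is what lets a trained VAE produce data that resembles the training set.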

Transformers

Transformers have been paramount for the development of LLMs such as GPT-3 and GPT-4. Their applications span human-like text generation, accurate answers to questions, and language translation. The Transformer model brought forth a few innovations. The most important one is the self-attention mechanism, which enables the model to assess the relevance of each word in relation to the others during output generation. This mechanism lets the model manage long-range dependencies in text better than its predecessors. Another innovation is positional encoding, which helps the model keep track of the position of words within a sentence.
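The two innovations can be illustrated with a short sketch in plain Python. It assumes sinusoidal positional encoding and scaled dot-product attention, and it deliberately simplifies: real transformers apply learned query, key, and value projections and use several attention heads, while here the token vectors are used directly. The toy embeddings are invented for the example.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal encoding: even dimensions use sin, odd dimensions use cos."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** (2 * (i // 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product attention with queries = keys = values = x."""
    d = len(x[0])
    outputs, weights = [], []
    for query in x:
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(d)
                  for key in x]
        w = softmax(scores)  # relevance of every token to this one
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, x))
                        for c in range(d)])
    return outputs, weights

# Toy embeddings: the first and third token are the same word.
tokens = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0]]
pe = positional_encoding(len(tokens), 4)
x = [[t + p for t, p in zip(tok, pos)] for tok, pos in zip(tokens, pe)]
out, weights = self_attention(x)
print(weights)
```

Each row of `weights` shows how much one token attends to every other token. Adding the positional encoding makes the two identical words distinguishable by their place in the sentence.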

Our data science team also applies all these techniques to achieve the best results for generative AI solutions. Read below to learn more about our projects.

4 Generative AI use cases Lemberg Solutions' data science team has implemented

Data science engineers at Lemberg Solutions couldn’t help but join the development of generative AI solutions. We’ve created the following: document “smart search,” email classification, FAQ chatbot, and classification of queries into classes and subclasses. Read below to learn more about the technical peculiarities and perspectives of our generative AI use cases.

1. Smart search in documents

The document smart search solution helps find specific information in a custom dataset (e.g., company policies, legal documents, or contracts). It is especially useful when it comes to large data volumes.

Our data science team created an algorithm that conducts the search contextually rather than relying on keywords. For instance, if you have a document about information security regulations in the company, you can provide it to a large language model (LLM) as custom data and ask questions like: "Can I bring my own laptop to the office and use it for work projects?" The model tries to understand the context of the document and provide an accurate answer to the given question. This way, the answer takes into account both the context and the keywords relevant to the user’s request. We employed the ChatGPT model as the LLM, restricted it to the custom data as its context, and used it to provide contextual answers to prompts.

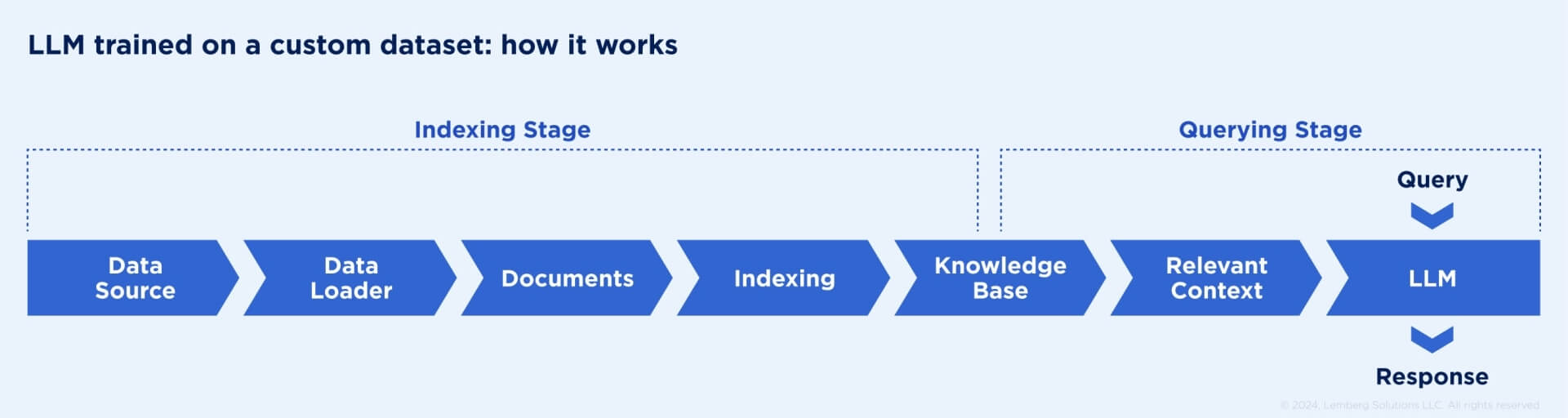

This is how the model works:

We’ve implemented the smart search project by using LlamaIndex and OpenAI's model. LlamaIndex is a data framework specifically designed to work with a custom knowledge base, while OpenAI’s model helps to index the dataset. Our algorithm uses LlamaIndex as a query engine (single question & answer), but we can upgrade it to a chat engine (keeping conversation history). The solution indexes private data, which creates a searchable knowledge base within your field of interest. If you have any concerns about security, our data science team can create a smart search solution that runs an LLM on your local server instead of in an OpenAI environment.
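The difference between a query engine and a chat engine can be shown with a simplified sketch. It does not use the LlamaIndex or OpenAI APIs: `retrieve`, `QueryEngine`, `ChatEngine`, and `stub_llm` are hypothetical stand-ins written for this illustration, and the keyword-overlap retriever is a toy replacement for a real vector index.

```python
import re

def words(text):
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, chunks, top_k=1):
    """Toy retriever: rank chunks by how many words they share with the question."""
    q = words(question)
    return sorted(chunks, key=lambda c: len(q & words(c)), reverse=True)[:top_k]

def build_prompt(question, context, history=()):
    lines = ["Answer using only the context below.", "Context:", *context]
    for past_question, past_answer in history:
        lines += [f"User: {past_question}", f"Assistant: {past_answer}"]
    lines.append(f"User: {question}")
    return "\n".join(lines)

class QueryEngine:
    """Single question and answer: nothing is remembered between calls."""
    def __init__(self, chunks, llm):
        self.chunks, self.llm = chunks, llm

    def query(self, question):
        context = retrieve(question, self.chunks)
        return self.llm(build_prompt(question, context))

class ChatEngine(QueryEngine):
    """Same retrieval step, but earlier turns are replayed in every prompt."""
    def __init__(self, chunks, llm):
        super().__init__(chunks, llm)
        self.history = []

    def chat(self, question):
        context = retrieve(question, self.chunks)
        answer = self.llm(build_prompt(question, context, self.history))
        self.history.append((question, answer))
        return answer

# Hypothetical policy snippets and a stub in place of a real LLM call.
chunks = [
    "Personal laptops may be used in the office after IT registration.",
    "Visitors must sign in at the front desk.",
]
prompts = []

def stub_llm(prompt):
    prompts.append(prompt)  # record what the model would have received
    return "stub answer"

chat = ChatEngine(chunks, stub_llm)
chat.chat("Can I bring my own laptop to the office?")
chat.chat("Does it need registration?")
print(prompts[-1])  # the second prompt also carries the first exchange
```

With the chat engine, a follow-up such as "Does it need registration?" reaches the model together with the previous turn, so "it" can be resolved. The query engine would send the follow-up on its own.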

Below, you will see examples of model performance based on the MonoLets project development case study taken from our website.

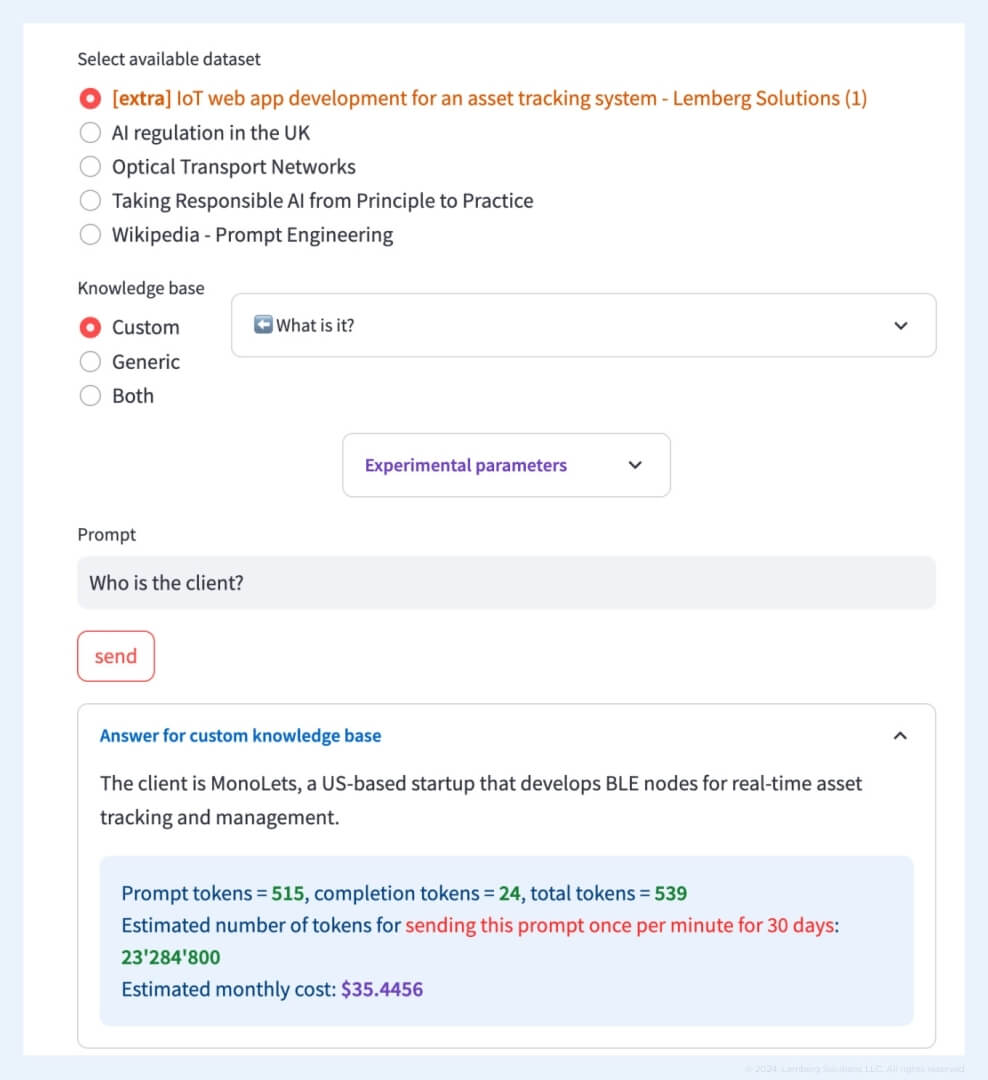

If you choose a “custom” knowledge base, meaning that ChatGPT applies the info from a custom dataset only, the output is the following:

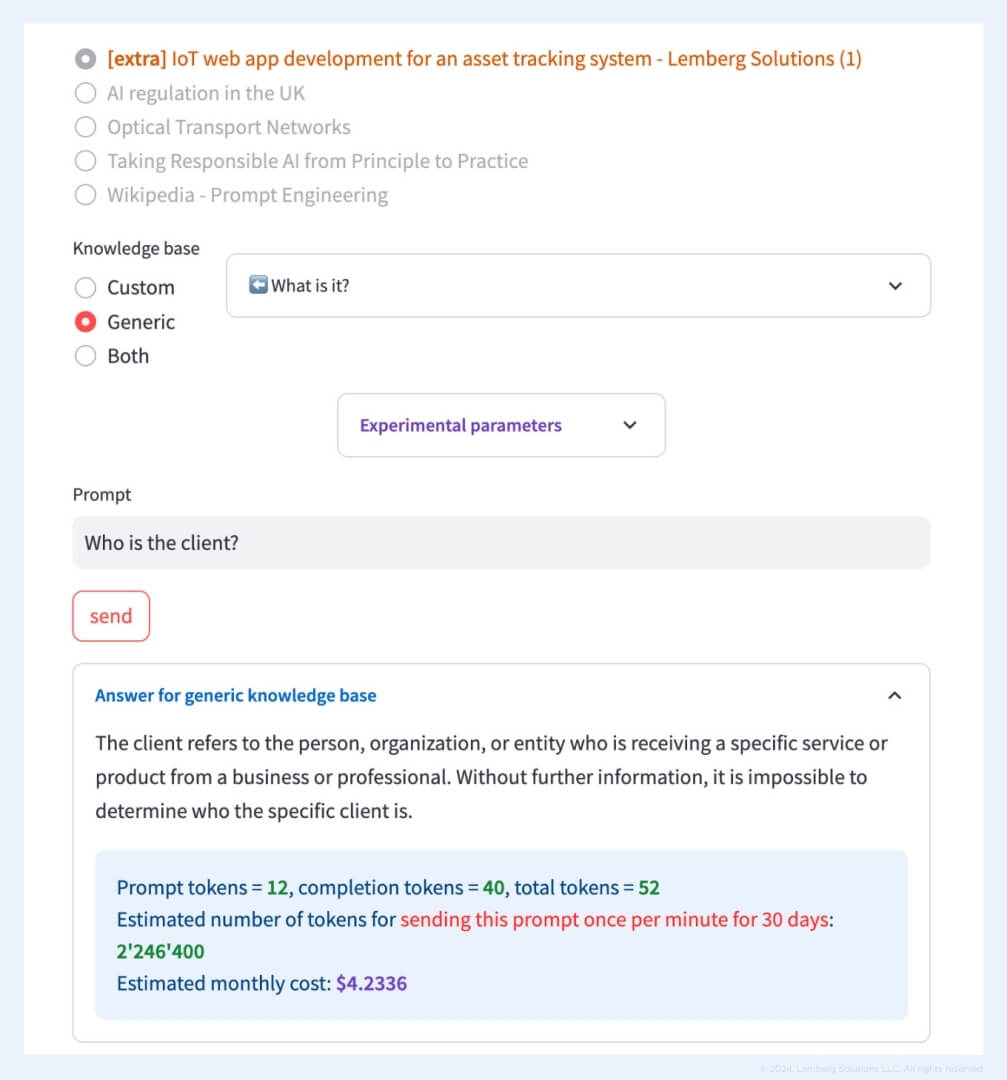

If you choose a “generic” knowledge base, meaning that ChatGPT uses its original knowledge without basing its answer on a custom dataset, the output is the following:

2. Email classification

With the numerous emails you receive every day, you can sort them into folders and rely on software configurations that protect you from spam and other issues. However, by using AI tools that understand context, you can achieve more than an orderly inbox and data protection. For instance, lawyers can benefit from email classification based on the area of law, urgency, emotional tone, etc. This way, users can automatically prioritize their work.

In our demo app, data science engineers used an open dataset consisting of 20,000 emails with 20 classes and subclasses. This solution is an example of single-label classification, where each email is assigned exactly one class. The same approach can be applied for anomaly detection (identifying unusual patterns in text data), sentiment analysis (determining the sentiment expressed in text, whether it is positive, negative, or neutral), intent recognition (identifying the intent behind a user's message), and event detection (detecting specific events or activities mentioned in text).

For our project, our AI experts employed the TF-IDF algorithm to determine the "impact" of each word in a document, that is, how characteristic the word is of this document compared with the rest of the dataset. To classify emails into classes and subclasses, we used a Naive Bayes model.
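The combination of TF-IDF and Naive Bayes can be sketched in plain Python. This is a simplified illustration rather than our production pipeline: it assumes smoothed IDF weights and a multinomial Naive Bayes model fitted on TF-IDF values, handles a single level of classes only, and uses four invented emails instead of the real dataset.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())

def fit_idf(docs):
    """Smoothed inverse document frequency: rarer words get a larger weight."""
    n = len(docs)
    df = Counter(word for doc in docs for word in set(tokenize(doc)))
    return {w: math.log((1 + n) / (1 + c)) + 1.0 for w, c in df.items()}

def tfidf(text, idf):
    """Term frequency times IDF; words unseen during fitting are ignored."""
    counts = Counter(tokenize(text))
    return {w: c * idf[w] for w, c in counts.items() if w in idf}

def train_naive_bayes(docs, labels, idf, alpha=1.0):
    """Multinomial Naive Bayes fitted on TF-IDF weights with Laplace smoothing."""
    weight = defaultdict(Counter)
    for doc, label in zip(docs, labels):
        weight[label].update(tfidf(doc, idf))
    model = {}
    for label in weight:
        total = sum(weight[label].values())
        prior = math.log(labels.count(label) / len(labels))
        log_prob = {w: math.log((weight[label][w] + alpha)
                                / (total + alpha * len(idf))) for w in idf}
        model[label] = (prior, log_prob)
    return model

def classify(text, model, idf):
    features = tfidf(text, idf)

    def score(label):
        prior, log_prob = model[label]
        return prior + sum(v * log_prob[w] for w, v in features.items())

    return max(model, key=score)

# Invented training emails, two per class.
emails = [
    "The pitcher threw a perfect game last night",
    "Home run in the ninth inning wins the game",
    "New graphics card renders images faster",
    "Driver update fixes the graphics rendering bug",
]
labels = ["baseball", "baseball", "graphics", "graphics"]

idf = fit_idf(emails)
model = train_naive_bayes(emails, labels, idf)
print(classify("Who won the game in the last inning?", model, idf))
```

Common words such as "the" receive a low IDF weight, so class-specific words like "inning" or "graphics" dominate the decision.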

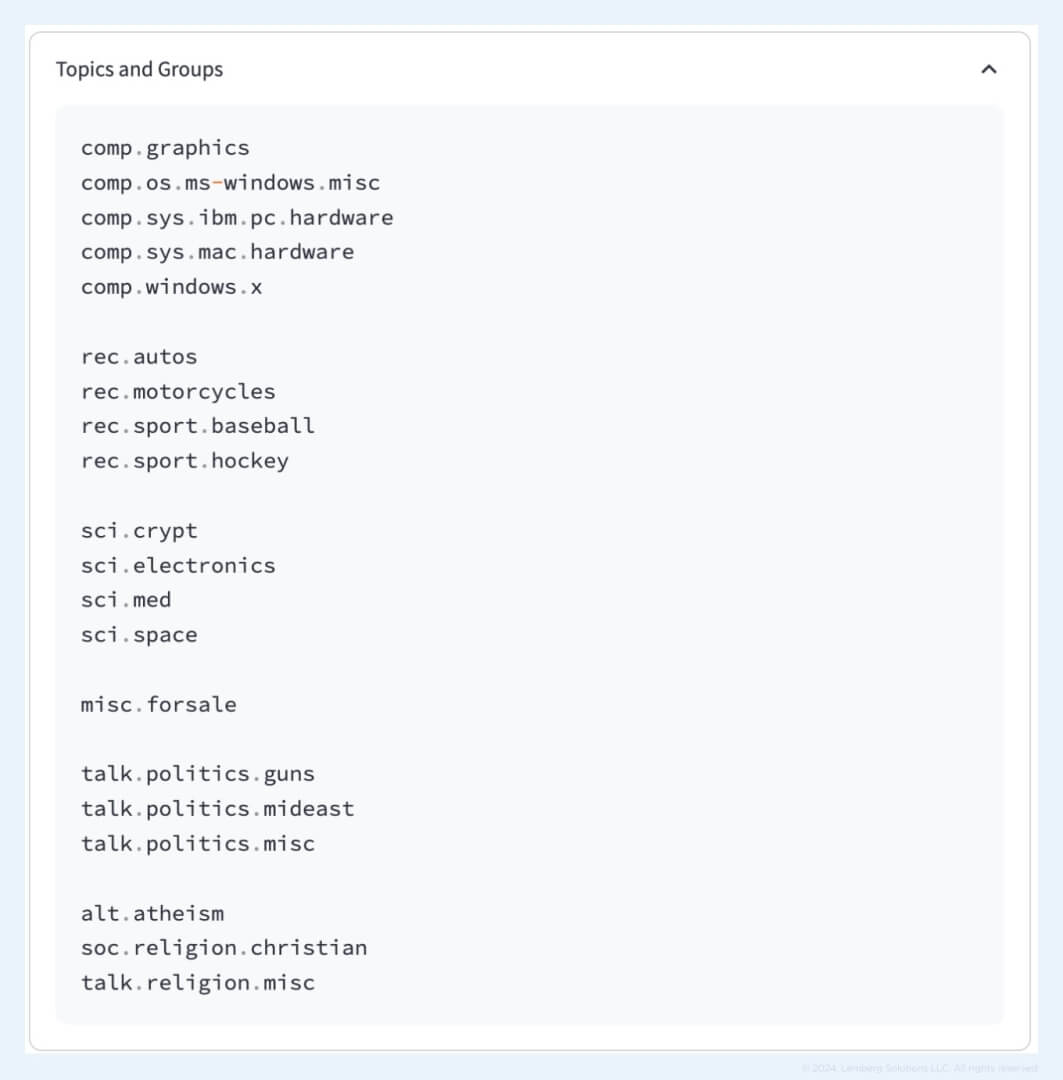

As you can see in the example below, our data science team grouped closely related topics into 6 high-level groups:

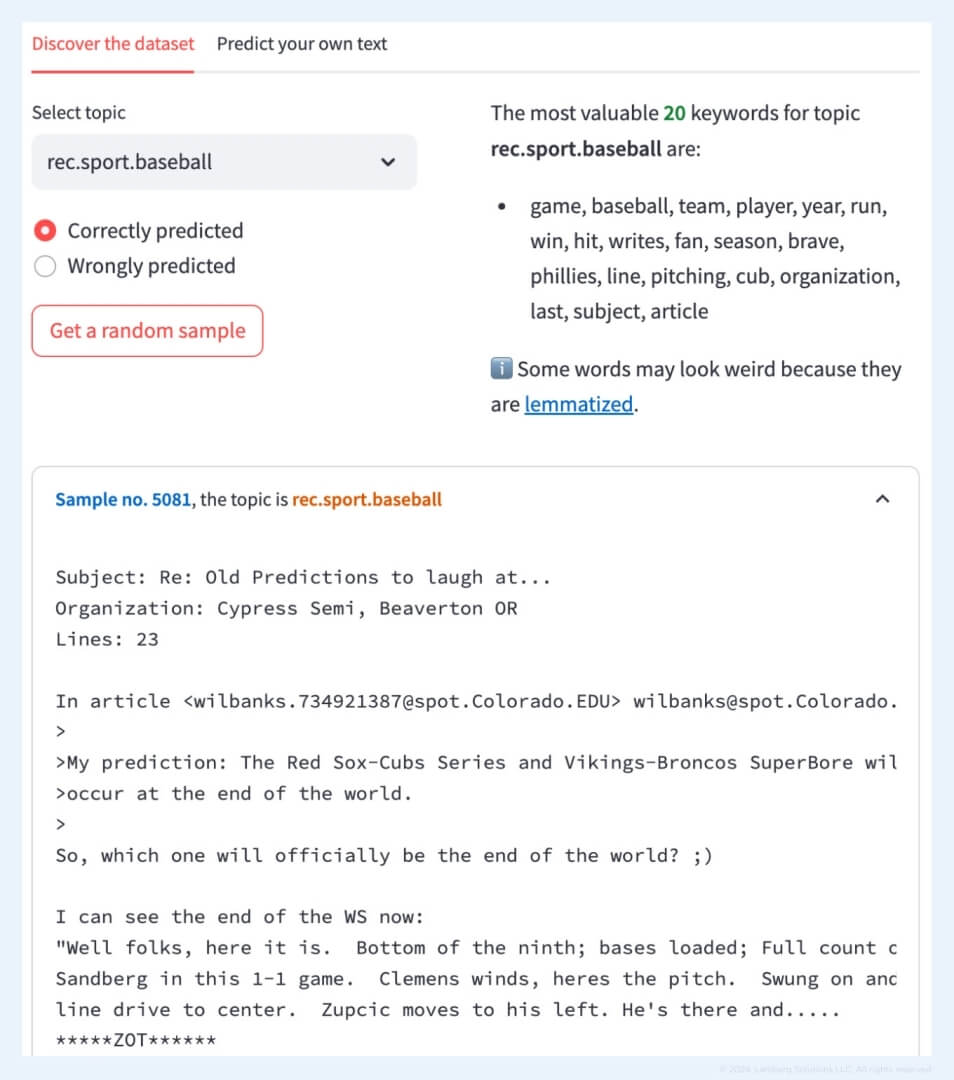

If you choose the topic “baseball,” the model offers the following keywords. The screenshot also demonstrates the email sample on which our data science team trained the model.

3. FAQ chatbot

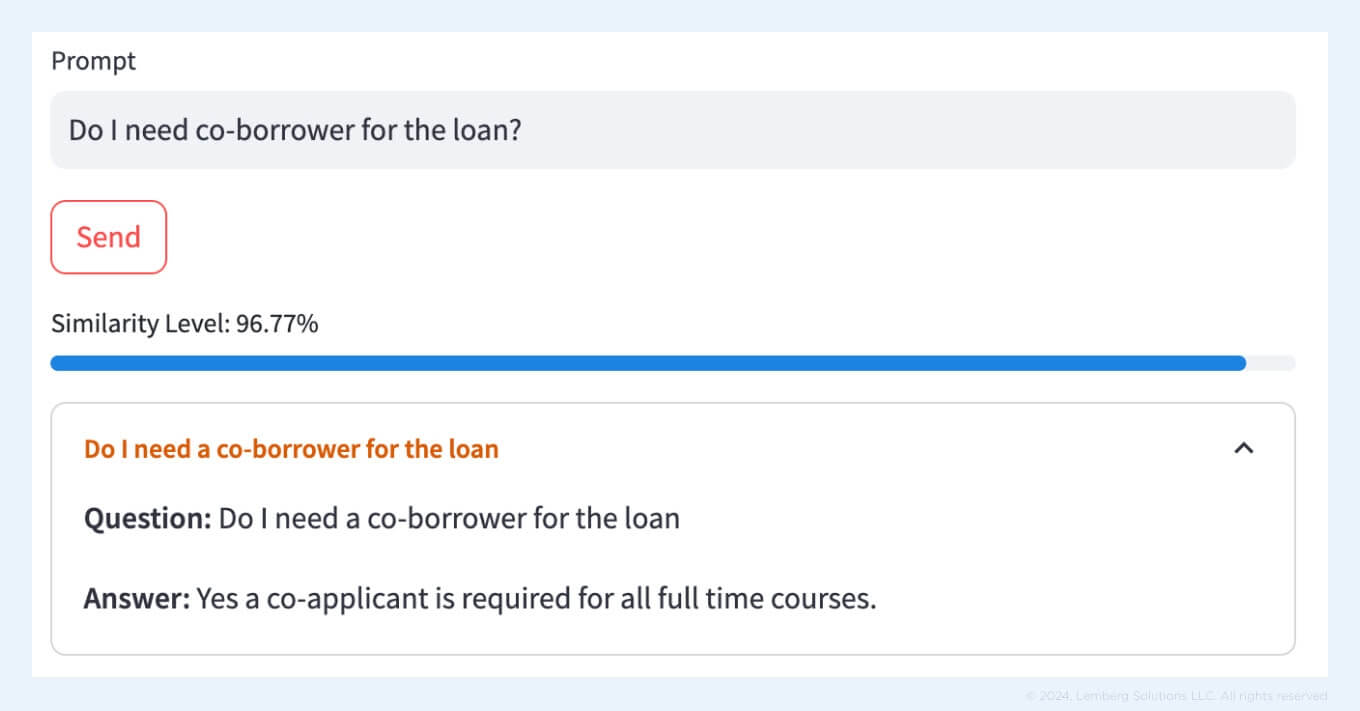

Another solution that can help you build effective and fast interaction with customers is the FAQ chatbot. Modern AI algorithms can better understand individual contexts and provide personalized responses, especially if data scientists leverage embedding models. For this solution, in addition to the OpenAI embedding model, we used Faiss, a similarity search library, to ensure that the system efficiently finds relevant responses to users’ questions.

We applied two variants of vectorizers:

- The OpenAI vectorizer, which allows for a high-quality identification of "similarity" between the question and answer.

- The TF-IDF algorithm (as in the email classification case).
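The retrieval step behind such a bot can be sketched with the TF-IDF variant in plain Python. The sketch makes several assumptions: the FAQ entries are invented, the user's question is matched against the stored questions, the similarity threshold is arbitrary, and a brute-force cosine search stands in for the vector index that a library like Faiss would provide.

```python
import math
import re
from collections import Counter

# Hypothetical FAQ entries: stored question -> stored answer.
faq = {
    "How do I reset my password?": "Use the 'Forgot password' link on the login page.",
    "What are your support hours?": "Support is available on weekdays from 9:00 to 18:00.",
    "How can I cancel my subscription?": "Open Settings, then Billing, and choose Cancel.",
}

def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())

def build_idf(texts):
    n = len(texts)
    df = Counter(w for t in texts for w in set(tokenize(t)))
    return {w: math.log((1 + n) / (1 + c)) + 1.0 for w, c in df.items()}

def vectorize(text, idf):
    """Sparse TF-IDF vector stored as a word -> weight dictionary."""
    counts = Counter(tokenize(text))
    return {w: c * idf[w] for w, c in counts.items() if w in idf}

def cosine(a, b):
    dot = sum(v * b.get(w, 0.0) for w, v in a.items())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

questions = list(faq)
idf = build_idf(questions)
index = [vectorize(q, idf) for q in questions]  # one vector per stored question

def answer(user_question, threshold=0.2):
    """Return the answer of the most similar stored question, or None."""
    query = vectorize(user_question, idf)
    scores = [cosine(query, vec) for vec in index]
    best = max(range(len(questions)), key=scores.__getitem__)
    if scores[best] < threshold:
        return None  # nothing similar enough: hand over to a human
    return faq[questions[best]]

print(answer("I forgot my password, how do I reset it?"))
```

Swapping `vectorize` for an embedding model changes how the vectors are produced, while the nearest-neighbor lookup stays the same in principle.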

An NLP FAQ bot comes in handy when the number of questions and answers grows significantly and you need a solution that won’t lose its effectiveness and speed.

Here is an example of how our FAQ chatbot provides legal assistance based on the information from a custom dataset:

There are several advantages of using an automated FAQ bot:

- Improved customer service

An FAQ bot immediately generates accurate responses to user queries, ensuring quick issue resolution. This leads to increased customer satisfaction levels and improved service quality.

- 24/7 support

Unlike customer support representatives who have limited working hours, an FAQ bot is available round the clock. Customers can receive help anytime, regardless of the time zone or working hours.

- Scalability and cost-efficiency

The bot can handle multiple customer queries simultaneously without any delay. Such scalability eliminates the need to hire and train additional staff.

- Increased productivity

While the bot addresses repetitive queries, your employees can focus on more complex and high-value tasks. This increases their productivity, ensuring they provide enhanced assistance to users when their expertise is required.

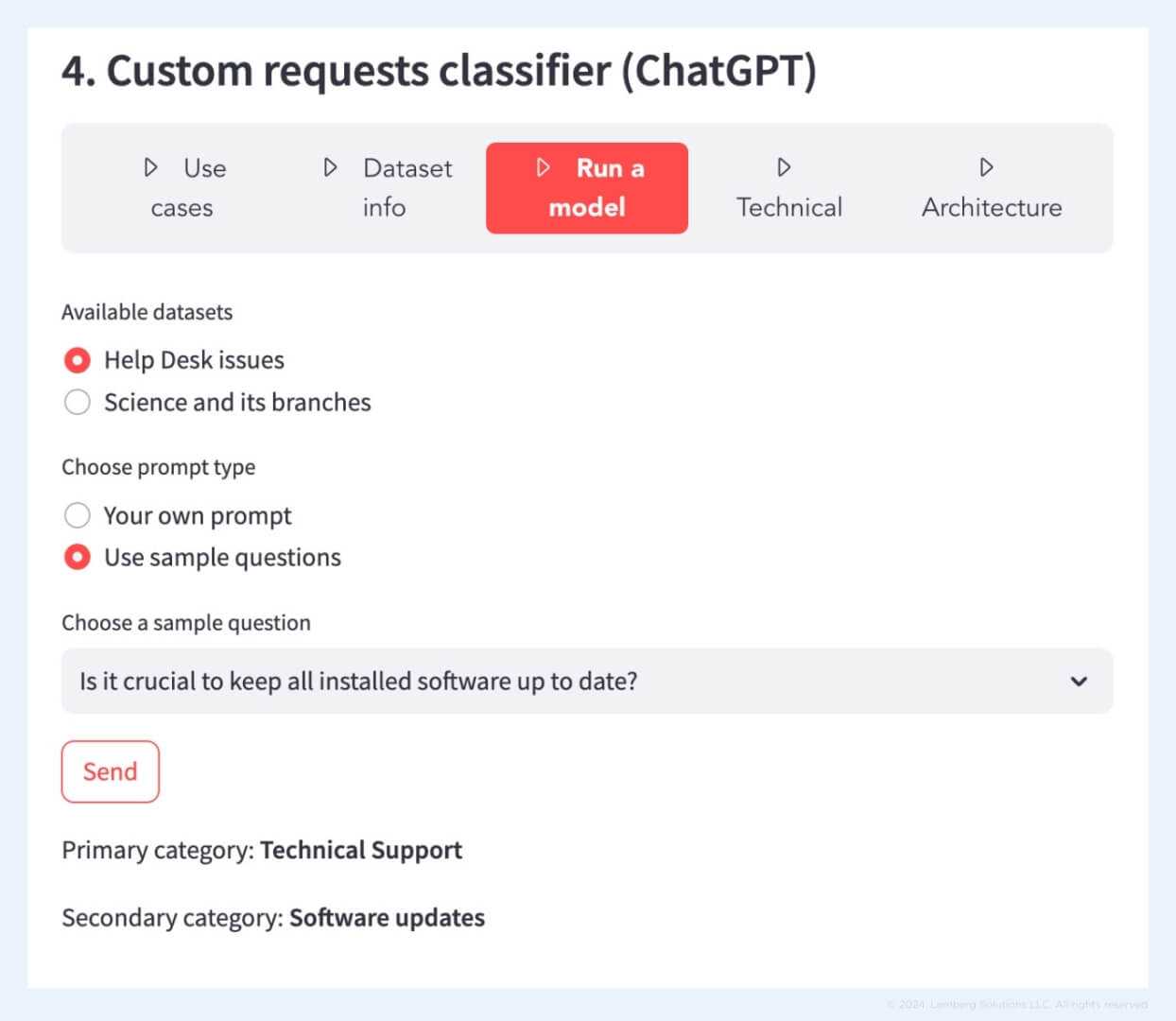

4. Classification of queries into classes and subclasses

Our data science team worked on a software solution that automatically tags a query and assigns it to the relevant category. After that, the query can be directed to the proper department for further processing. This allows you to process more queries in the same amount of time. For this type of classification, we leveraged the ChatGPT model.

The screenshot below shows how the model trained on our dataset about company security policy responds to prompts. The algorithm provides the primary and secondary categories based on a question it receives.
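One way to obtain a primary and a secondary category from an LLM is to list the allowed categories in the prompt and validate the reply before routing the query. The sketch below illustrates that idea only: the taxonomy, the prompt wording, and `stub_llm` (which stands in for a real ChatGPT call) are hypothetical and not taken from our project.

```python
import json

# Hypothetical taxonomy for a company security policy.
TAXONOMY = {
    "Devices": ["Personal laptops", "Mobile phones"],
    "Access": ["Passwords", "VPN"],
    "Data": ["Backups", "Sharing files"],
}

def build_prompt(query):
    lines = [
        "Classify the query into one primary and one secondary category.",
        'Reply with JSON: {"primary": "...", "secondary": "..."}',
        "Allowed categories:",
    ]
    for primary, secondaries in TAXONOMY.items():
        lines.append(f"- {primary}: {', '.join(secondaries)}")
    lines.append(f"Query: {query}")
    return "\n".join(lines)

def parse_reply(reply):
    """Accept the reply only if both labels exist in the taxonomy."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    primary, secondary = data.get("primary"), data.get("secondary")
    if (isinstance(primary, str) and primary in TAXONOMY
            and secondary in TAXONOMY[primary]):
        return primary, secondary
    return None

def classify(query, llm):
    return parse_reply(llm(build_prompt(query)))

def stub_llm(prompt):
    # Stand-in for a real chat-completion call; always returns the same labels.
    return '{"primary": "Devices", "secondary": "Personal laptops"}'

print(classify("Can I use my own laptop for work projects?", stub_llm))
```

Rejecting replies that fall outside the taxonomy keeps an unexpected model output from sending a query to a department that does not exist.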

Did you know that you can test the projects yourself if you need a similar solution for your project? Contact our experts, and they will help you choose the best solution depending on your project needs. Using these AI examples in real life will ensure effective software automation for your business needs.

Generative AI examples in different industries

Generative AI examples encompass various industries, including healthcare, automotive, the energy sector, insurance, retail and eCommerce, and finance. Here is how companies leverage innovative technologies today:

Healthcare

Generative AI can help healthcare professionals with their administrative tasks. With GenAI integrated into specific medical software, physicians can request document summaries and patient information and receive them immediately. This way, medical workers gain more time to focus on patient treatment and diagnostics.

Moreover, GenAI can be integrated into a chatbot that answers patients’ questions in real time. The chatbot solution can be trained on custom datasets, which helps ensure that patients receive accurate and safe responses.

Automotive

The generative AI market in the automotive industry was estimated at $312.46 million in 2022 and is expected to grow to $2,691.92 million by 2032.

One of the most widespread ways to apply GenAI in the automotive industry is the AI-based virtual assistant that provides drivers with needed information related to roadside services. The system ensures support tailored to the driver’s needs and location. The drivers can share information about their issue and location, while the virtual assistant leverages its data on roadside assistance services to provide an accurate output, including the contact information of a service provider.

Energy sector

Within the energy sector, generative AI can help users receive practical tips on energy consumption and saving. An assistant based on GenAI can effectively respond to user queries, providing data on their consumption patterns, carbon footprint reduction tips, and even advice on energy cost optimization.

Insurance

Another generative AI example is Helvetia, an insurance company based in Switzerland and a pioneer in deploying generative AI to develop a customer engagement service. Their AI-driven solution is specifically designed to address customer inquiries concerning insurance. Helvetia named their digital assistant Clara. The technology is currently undergoing testing since the company plans to integrate ChatGPT with its insurance data. Clara leverages data from Helvetia's website. On receiving a customer request, the chatbot finds the needed information on the website and responds to the user. This approach spares users from searching for information on their own.

Retail and eCommerce

In the same way as Spotify applies generative AI to create personalized playlists for their users, the retail and eCommerce industry can leverage GenAI to create custom product recommendations. Taking into account users’ purchase history, a GenAI assistant will provide unique suggestions, increasing customer satisfaction rates. Retail and eCommerce businesses can also use GenAI to generate product descriptions, designs, and realistic images.

Finance

Generative AI is used to develop banking chatbots that deliver more authentic, human-like responses. As a result, customers gain a personalized experience that increases their satisfaction with the bank's services. Moreover, AI-empowered chatbots can engage with customers in different languages, which enables banks to scale by reaching a global audience. Within the financial sector, AI chatbots can also offer customers suitable services based on their preferences.

These generative AI examples aim to showcase how businesses grasp the opportunity to automate their processes and increase productivity. No matter what industry you want to conquer, generative AI is a must-have technology for this journey.

Best generative AI tools for businesses

ChatGPT

OpenAI’s creation, ChatGPT, is an advanced generative AI tool that runs on large language models (LLMs) for seamless natural language processing. The latest version, GPT-4, is a transformer-based pretrained model. OpenAI has not disclosed its parameter count, while its predecessor, GPT-3, has 175 billion parameters. ChatGPT finds diverse applications across multiple domains, serving for email marketing campaigns, content creation, translation, and more. Its text generation resembles human conversation, making it a valuable asset across various industries.

DALL-E

DALL-E, an innovative generative AI technology, specializes in generating images based on users’ text descriptions. DALL-E can create anthropomorphic versions of different objects and merge seemingly incompatible concepts in a believable manner. Its utility spans diverse applications, making notable contributions to design, content creation, and educational initiatives.

Midjourney

Another generative AI tool, Midjourney, was designed for cutting-edge digital art creation. The core of the Midjourney architecture hasn’t been revealed to the public. However, some engineers suggest that it is based on GAN and VAE models. Art generated by Midjourney is even shown at digital art exhibitions.

How we can help with GenAI implementation

Generative AI is slowly becoming our day-to-day assistant, be it in the agriculture, automotive, or healthcare industry. Even though this technology is young and requires improvements, data science engineers can successfully integrate it into routine tasks. For instance, our data science team created several solutions using generative AI: smart search, email classification, FAQ chatbot, and classification of queries into classes and subclasses. These projects help prioritize tasks, improve customer satisfaction with a service, and increase productivity within different company departments.

If you need a generative AI solution to boost your business growth and automate routine tasks, schedule a call and tell us about your needs.

FAQ

What’s the difference between machine learning, deep learning, and generative AI?

Machine learning (ML) is a branch of computer science in which computers learn to discern patterns from sample data. Within ML, deep learning is a specific technique involving neural networks whose nodes, similar in structure to brain neurons, serve as processing units working with inputs and outputs. Generative AI builds on such models to create new content rather than only analyze existing data. For instance, a deep learning model can identify an object in an image, while a generative AI model can create an image with the needed object.

In the training phase for LLMs, how do you manage risk responsibly?

A recommended approach is to meticulously filter training data to eliminate any undesired content or personal information before training the model. This proactive measure significantly reduces the risk of the model generating unacceptable content. Additionally, our data scientists use classifiers and fine-tuning to train the model against generating harmful responses.
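As an illustration of the filtering step, here is a minimal sketch that masks email addresses and phone numbers and drops records containing blocklisted terms. It is a rough heuristic under stated assumptions: the regular expressions and the blocklist are hypothetical examples, and a real pipeline would need far broader coverage (names, addresses, IDs) and manual review.

```python
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")  # rough pattern, may over-match
BLOCKLIST = {"password", "ssn"}               # hypothetical undesired terms

def scrub(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

def filter_records(records):
    """Drop records with blocklisted terms and scrub the remaining ones."""
    kept = []
    for record in records:
        words = set(re.findall(r"[a-z]+", record.lower()))
        if words & BLOCKLIST:
            continue
        kept.append(scrub(record))
    return kept

raw = [
    "Write to jane.doe@example.com or call +1 (555) 010-9999.",
    "My password is hunter2, please keep it safe.",
    "The office is open on weekdays.",
]
for line in filter_records(raw):
    print(line)
```

Running such a filter before training reduces the chance that personal details end up in the model, though it does not replace the classifiers and fine-tuning mentioned above.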