Motion gesture detection

The challenge

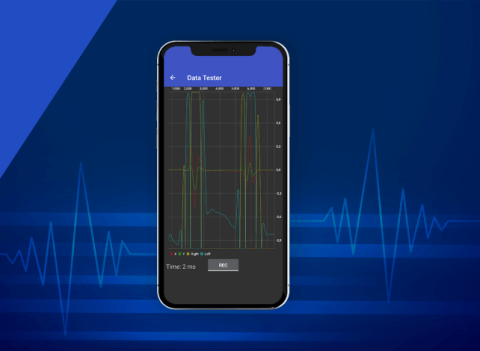

We set out to design a neural network capable of recognizing specific motion gestures, such as quick motions to the left and right.

To do that, we had to work through every step of implementing motion gesture recognition in an Android application using the TensorFlow library: capturing and preprocessing training data, designing and training a neural network, and developing a test application and a ready-to-use Android library.

Delivered value

The result is a library that can be integrated into any Android application to add motion gesture support.

The process

The idea came up after our engineer completed the first part of Udacity's "Self-Driving Car Engineer Nanodegree Program", a course that partially covers machine learning.

The process included several stages: collecting data, designing and training a neural network, and implementing a demo application and an Android library ready to be included in other projects.

You can find a full description of the process, along with a video demonstration, in the article on our blog.

How it works