Suppose your business wants to develop an embedded AI solution: a sound recognition application for a fleet of 10K devices. Continuous sound streaming generates about 200TB of data per month, and to detect sounds in real time, inference has to run 24/7. Let's run some numbers.
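To put the 200TB figure in perspective, here is a quick per-device breakdown. It is a back-of-envelope sketch that assumes decimal units (1 TB = 10^12 bytes), a 30-day month, and traffic spread evenly across the fleet.

```python
# Back-of-envelope: what 200 TB/month across a 10K-device fleet means per device.
# Assumptions: decimal units (1 TB = 10**12 bytes), a 30-day month, even traffic.

FLEET_SIZE = 10_000
MONTHLY_FLEET_BYTES = 200 * 10**12   # 200 TB per month (scenario figure)
SECONDS_PER_MONTH = 30 * 24 * 3600   # 2,592,000 s in a 30-day month


def per_device_rates(fleet_bytes: float, fleet_size: int, seconds: int) -> tuple[float, float]:
    """Return (GB per device per month, sustained upstream kbit/s per device)."""
    bytes_per_device = fleet_bytes / fleet_size
    gb_per_month = bytes_per_device / 10**9
    kbit_per_s = bytes_per_device * 8 / seconds / 1000
    return gb_per_month, kbit_per_s


gb, kbps = per_device_rates(MONTHLY_FLEET_BYTES, FLEET_SIZE, SECONDS_PER_MONTH)
print(f"{gb:.0f} GB per device per month, ~{kbps:.1f} kbit/s sustained")
# prints: 20 GB per device per month, ~61.7 kbit/s sustained
```

Around 62 kbit/s per device may look modest, but it flows around the clock and is multiplied by every device in the fleet.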

Going with the cloud, you can start small, but as you scale, your monthly bandwidth, inference, and storage costs alone can easily reach $70K. An edge architecture can cut these ongoing expenses significantly, to as little as $5K. But you will face a major up-front hardware investment ($150–$300 per device) and persistently high maintenance bills ($25–35K/month). So which factors should you account for when investing in AI architecture, and how do you make sure it delivers a positive ROI?
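The trade-off above can be reduced to a simple payback calculation. The sketch below plugs in the article's estimates and assumes that cloud costs stay flat at $70K/month, that the edge's running costs are the $5K figure plus the $25–35K maintenance range, and that nothing else changes over time; real bills will drift from all of these.

```python
# Rough break-even sketch for the 10K-device scenario.
# Figures are the article's estimates; pairing the range bounds is an assumption.

FLEET_SIZE = 10_000
CLOUD_MONTHLY = 70_000  # bandwidth + inference + storage, USD/month


def edge_break_even_months(hw_per_device: float, edge_ongoing: float, maintenance: float) -> float:
    """Months until the up-front edge hardware is paid back by lower monthly bills.

    Returns float("inf") if the edge option is not cheaper month to month.
    """
    upfront = hw_per_device * FLEET_SIZE
    edge_monthly = edge_ongoing + maintenance
    monthly_saving = CLOUD_MONTHLY - edge_monthly
    if monthly_saving <= 0:
        return float("inf")
    return upfront / monthly_saving


best = edge_break_even_months(hw_per_device=150, edge_ongoing=5_000, maintenance=25_000)
worst = edge_break_even_months(hw_per_device=300, edge_ongoing=5_000, maintenance=35_000)
print(f"best case: {best:.1f} months, worst case: {worst:.1f} months")
# prints: best case: 37.5 months, worst case: 100.0 months
```

Under these assumptions, the hardware pays for itself in roughly three to eight years, which is why the maintenance line deserves as much scrutiny as the cloud invoice.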

In this article, we provide a multi-factor, experience-backed edge AI vs cloud AI comparison that will help you make a strategically sound business decision.

What is the difference between edge AI and cloud AI?

The key distinction between the edge and the cloud lies in where data is processed.

In the context of AI development, data processing happens at two separate stages: during model training and at inference. In this article, we will be mostly discussing the inference stage, where the actual intelligence takes place as the application analyzes real-world data under real-world conditions and executes specific commands.

When we speak of edge AI, our experts explain, we mean data analysis close to the data source, at the network edge. This can happen directly on a device like a smart camera or on local hardware such as a vehicle's onboard computer or an industrial gateway in a manufacturing facility. Cloud AI, by contrast, means the app sends data from the device that collected it to a remote, centralized server for processing.

We summarized the key differences between edge AI and cloud AI in the table below:

| | Edge AI | Cloud AI |
|---|---|---|
| Where data is processed | Device controller, device computer, local server, internal gateway | Remote public or private server |
| Computing power | Limited | Massive |
| Response latency | Real-time | With delays |
| Data privacy | Device-level security | Secure transit needed |
| Data infrastructure needs | Collection, preprocessing, inference, output, temporary storage | Collection, filtering, transit, preprocessing, inference, output, storage |
| Connectivity needs | None, intermittent, short to mid-range | Continuous, regular, long-range |
| ML model requirements | Hardware-optimized, specialized, lightweight (in some cases) | No technical limits |
| Scalability and maintenance | Distributed, harder to manage | Centralized, easier to manage |
| Key costs | AI-capable hardware, device lifecycle management | Data transfer, bandwidth, compute, DevOps |

In practice, both architectural approaches involve trade-offs, depending on the tasks you need to solve and the constraints you have to work within.

For instance, edge AI solutions are more efficient in time-bound applications or restricted-access environments but need extra attention to hardware selection and ML model optimization. In contrast, cloud AI applications impose no technical limits on computing power and model size but require you to plan for data transfer infrastructure, speed, and costs.

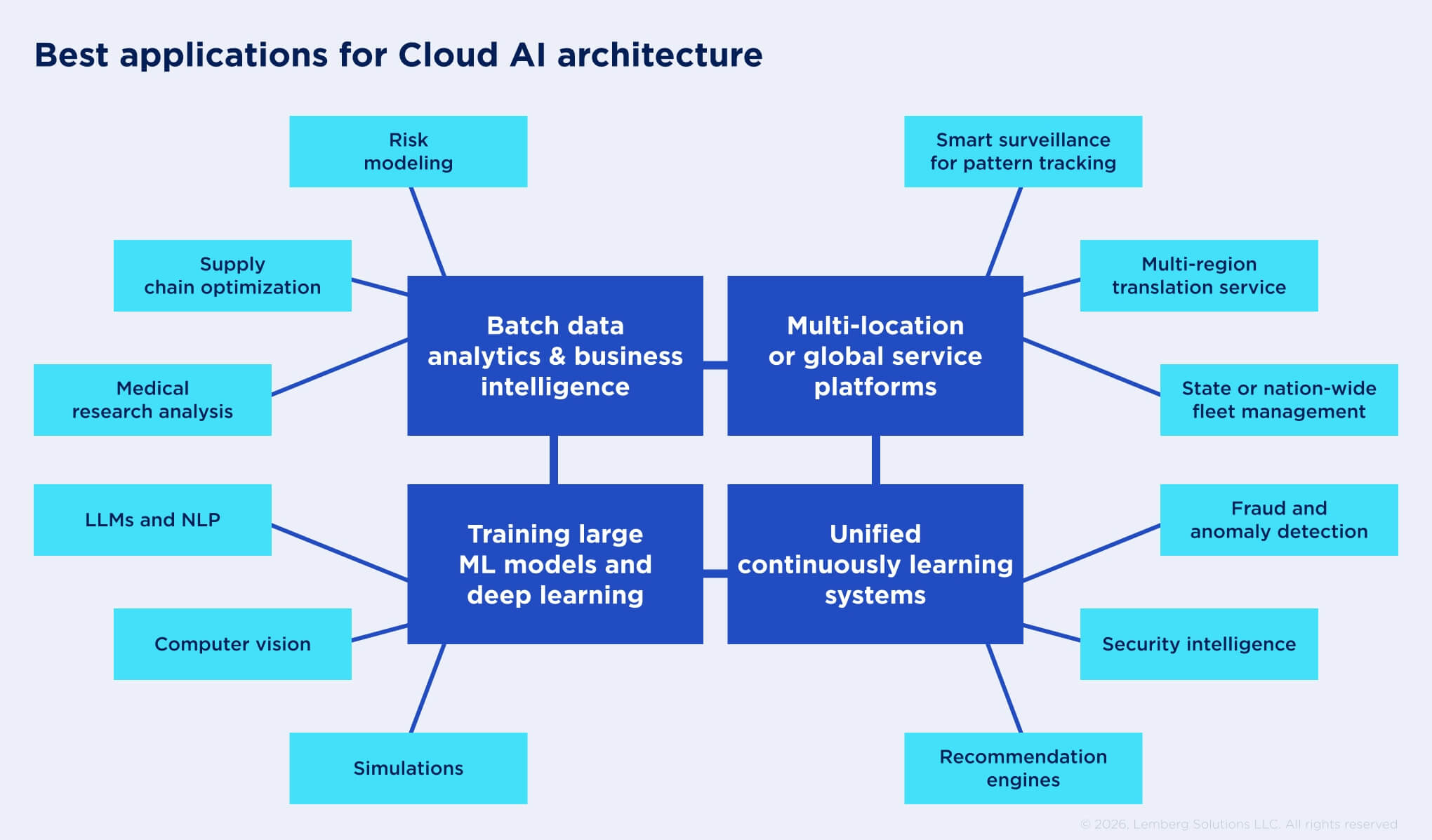

What is cloud AI used for?

The cloud provides technically unlimited computing power and data storage capacity needed to train and deploy any ML model. Our cloud development specialists explain that this allows developers to run deep learning neural networks or large ML models and use big data for large-scale data analytics and business intelligence.

Since all you need to access the cloud is internet connectivity, applications based on cloud AI can be accessible to users anywhere and anytime, they comment.

Thus, the centralized nature of the cloud enables SaaS products, which often require a shared, easy-to-scale infrastructure, global coordination, and multi-region coverage.

One more great feature of the cloud is high platform resilience. Cloud providers constantly take care of data redundancy, replication, and backups, which protects applications from unintentional data loss. In the context of edge AI vs cloud AI comparison, this makes the cloud a better technology foundation for business-critical AI solutions. For instance, financial or business operations support systems are heavily reliant on data integrity and accessibility that the cloud can provide.

When to choose cloud AI?

Considering such features as on-demand access, scalability, high performance, parallel processing, and high failure tolerance, you can rely on cloud AI architecture when you need:

- Massive computing power (for both ML model training and inference)

- Large data storage

- Batch data processing

- Data centralization

- Shared access to data and infrastructure

- Highly reliable data and system backup

Cloud in crop analysis: a data aggregation case

Cloud AI architecture works great when the system depends on complex models, centralized learning from large datasets, and frequent updates. Take, for example, a mobile app for crop analysis that relies on cloud-based computer vision. Here, the phone acts only as a data capture interface, while the heavy AI processing runs in the cloud.

To adapt the ML model to various grain types and nutrient applications, the client needed frequent model updates. Cloud-level data aggregation made a continuous learning approach possible, keeping the model's performance and accuracy adequate under changing soil and climate conditions and new disease patterns. Centralized training also helps the business optimize resource use:

- No training workload duplication or data fragmentation

- Cheaper and faster training cycles in shared environments

- Efficient cloud GPU utilization

- Simple model management and version control
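Here is a minimal, hypothetical sketch of why centralized training simplifies version control. Uploads from every device feed one shared pool, and a new model version is produced only once enough fresh data has accumulated. `ModelRegistry`, `retrain_threshold`, and the placeholder `_retrain` step are illustrative names, not the client's actual pipeline.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical central registry: one shared data pool and one version history."""
    retrain_threshold: int = 1_000        # assumed number of new samples per retraining
    version: int = 1
    pending_samples: int = 0
    contributors: set[str] = field(default_factory=set)
    history: list[str] = field(default_factory=list)

    def ingest(self, device_id: str, n_samples: int) -> None:
        # Uploads from every device land in the same pool, so nothing is fragmented.
        self.pending_samples += n_samples
        self.contributors.add(device_id)
        if self.pending_samples >= self.retrain_threshold:
            self._retrain()

    def _retrain(self) -> None:
        # Placeholder for a real training job on shared cloud GPUs.
        self.version += 1
        self.history.append(
            f"v{self.version}: {self.pending_samples} samples from {len(self.contributors)} devices"
        )
        self.pending_samples = 0
        self.contributors.clear()


registry = ModelRegistry(retrain_threshold=1_000)
for device, n in [("phone-a", 400), ("phone-b", 350), ("phone-c", 300)]:
    registry.ingest(device, n)
print(registry.version, registry.history)
# prints: 2 ['v2: 1050 samples from 3 devices']
```

Because every version is created in one place, the history doubles as an audit trail of which data produced which model.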

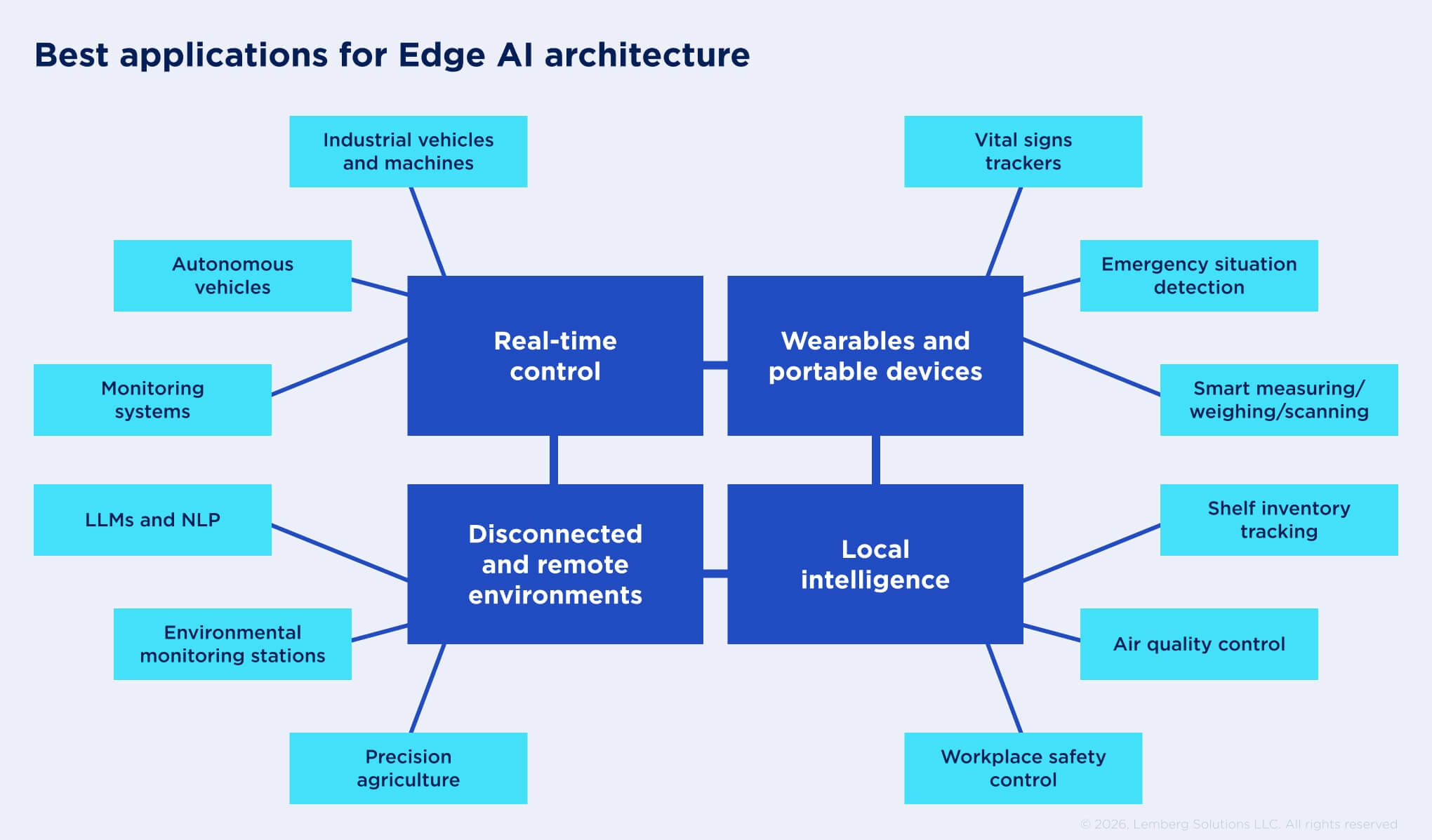

What is edge AI used for?

Edge architectures are gaining traction as AI penetrates every sphere of life.

The primary focus of edge AI is low-latency, real-time system response enabled by direct, on-device data processing that happens within milliseconds. It is possible due to specialized, hardware-optimized ML models deployed onto processors and controllers that can handle ML workloads without delay. This is why edge AI is widely used in time-sensitive applications: autonomous vehicles, smart robotic assistants, or safety control systems in industrial and warehouse environments.

In addition to real-time or near-real-time data analytics, edge AI architecture enhances data security. The data gets processed close to its source, eliminating the risk of exposure during transfer. This is often a decisive factor for embedded systems in highly regulated industries or critical infrastructure, such as energy, automotive, or defense tech, subject to rigid data sovereignty requirements. Edge AI also effectively addresses user data privacy, a major concern for customer-facing devices.

Another common use case for edge AI is offline machine learning inference in remote or disconnected environments. Since all required analytics happen at the device level, edge AI systems can keep generating business value even without a stable or constant internet connection, which is a significant factor in the edge AI vs cloud AI comparison. Common examples include fault detection and autonomous inspection robots at remote manufacturing sites.
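Offline operation usually relies on a store-and-forward pattern: inference and any local reaction happen on the device, while results are buffered until the link comes back. Below is a minimal sketch under that assumption. `StoreAndForward` and the `upload` callback are hypothetical, and the bounded buffer drops the oldest results when full, which is only one of several possible overflow policies.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffer on-device inference results while offline; upload them once the link returns.

    Hypothetical sketch: `upload` stands in for whatever transport the device uses.
    """

    def __init__(self, upload: Callable[[dict], None], max_items: int = 1000):
        self.upload = upload
        # Bounded buffer: when full, appending silently drops the oldest result.
        self.buffer: deque = deque(maxlen=max_items)

    def handle(self, result: dict, online: bool) -> None:
        # Inference (and any local alarm) has already happened on the device.
        self.buffer.append(result)
        if online:
            self.flush()

    def flush(self) -> None:
        # Send the backlog in the order it was produced.
        while self.buffer:
            self.upload(self.buffer[0])  # if this raises, the item stays buffered
            self.buffer.popleft()


sent: list[dict] = []
node = StoreAndForward(upload=sent.append, max_items=3)
node.handle({"seq": 1, "fault": False}, online=False)
node.handle({"seq": 2, "fault": True}, online=False)
node.handle({"seq": 3, "fault": False}, online=True)  # link restored: backlog uploaded
print([r["seq"] for r in sent])  # [1, 2, 3]
```

Which results to sacrifice when the buffer overflows is a design decision that depends on which data the business values most.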

When to choose edge AI?

Summing up, edge AI enables real-time data processing, portability, and autonomous operation for intelligent devices. These features are typically required when:

- A system interacts with the environment in real time

- System response time is safety-critical

- Connectivity is unavailable or unreliable

- Reduced bandwidth usage is needed

- Operation in regulated environments requires extra data security

- Device ergonomics is as important as quick response
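The edge and cloud checklists can be condensed into a rough rule of thumb. The hypothetical `suggest_architecture` helper below covers only a subset of the criteria listed above and simply checks which side's needs are present. It is a thinking aid, not a substitute for an architecture review.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Edge-leaning needs (from the edge AI checklist)
    realtime_interaction: bool = False
    safety_critical_latency: bool = False
    unreliable_connectivity: bool = False
    must_reduce_bandwidth: bool = False
    regulated_data: bool = False
    # Cloud-leaning needs (from the cloud AI checklist)
    massive_compute: bool = False
    large_storage: bool = False
    batch_processing: bool = False
    centralized_data: bool = False
    shared_access: bool = False


EDGE_NEEDS = ("realtime_interaction", "safety_critical_latency",
              "unreliable_connectivity", "must_reduce_bandwidth", "regulated_data")
CLOUD_NEEDS = ("massive_compute", "large_storage", "batch_processing",
               "centralized_data", "shared_access")


def suggest_architecture(req: Requirements) -> str:
    """Naive rule of thumb: which side's needs are present?"""
    edge = any(getattr(req, name) for name in EDGE_NEEDS)
    cloud = any(getattr(req, name) for name in CLOUD_NEEDS)
    if edge and cloud:
        return "hybrid"
    if edge:
        return "edge"
    if cloud:
        return "cloud"
    return "undecided"  # no strong driver either way: look at cost and scale


print(suggest_architecture(Requirements(safety_critical_latency=True, massive_compute=True)))
# prints: hybrid
```

In real projects the needs rarely fall neatly on one side, which is why the "hybrid" branch fires so often.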

Time and resource efficiency with edge AI

One of our most interesting embedded AI projects built on edge AI architecture was a computer vision livestock weighing device for a farming company. Here, we used a local server to run the custom animal weight recognition algorithm. As a result, farm workers can identify a pig's breed and estimate its weight from an image within 8–10 seconds and with 98% accuracy, simply by scanning the animal with a hand-held device.

In this solution, edge AI architecture ensures quick visual data collection and analysis. It made the routine weighing process 24 times faster, giving the staff a significant operational boost. The weighing device is also autonomous and portable, so it can easily be used anywhere on the farm.

For another client, we worked on an AI-powered ultrasonic device for predictive maintenance of rolling bearings in industrial machines. In this case, edge ML deployment enabled direct integration with the machine controllers, shutdown triggers, and alarms, providing a timely system response before any damage occurs. In addition, raw ultrasonic sensor streams are extremely large and would require significant bandwidth to stream to the cloud.
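The value of on-device integration lies in closing the control loop locally. In the sketch below, a simple RMS (root-mean-square) threshold stands in for the project's actual ML model, and the `on_alarm` callback stands in for a controller shutdown trigger. The signal values and the threshold are made up for illustration.

```python
import math
from typing import Callable, Sequence


def rms(window: Sequence[float]) -> float:
    """Root-mean-square amplitude of one window of sensor samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def monitor(windows: Sequence[Sequence[float]], threshold: float,
            on_alarm: Callable[[int], None]) -> int:
    """Score each window on the device and fire `on_alarm` on the first exceedance.

    Returns the index of the window that raised the alarm, or -1 if none did.
    """
    for i, window in enumerate(windows):
        if rms(window) > threshold:
            on_alarm(i)  # e.g. assert a shutdown line on the machine controller
            return i
    return -1


alarms: list[int] = []
windows = [
    [0.1, -0.1, 0.1, -0.1],   # normal vibration
    [0.2, -0.2, 0.2, -0.2],   # slightly elevated
    [0.9, -0.8, 0.9, -0.8],   # abnormal burst
]
idx = monitor(windows, threshold=0.5, on_alarm=alarms.append)
print(idx, alarms)  # 2 [2]
```

Only the alarm event, not the raw high-rate stream, would then need to leave the device.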

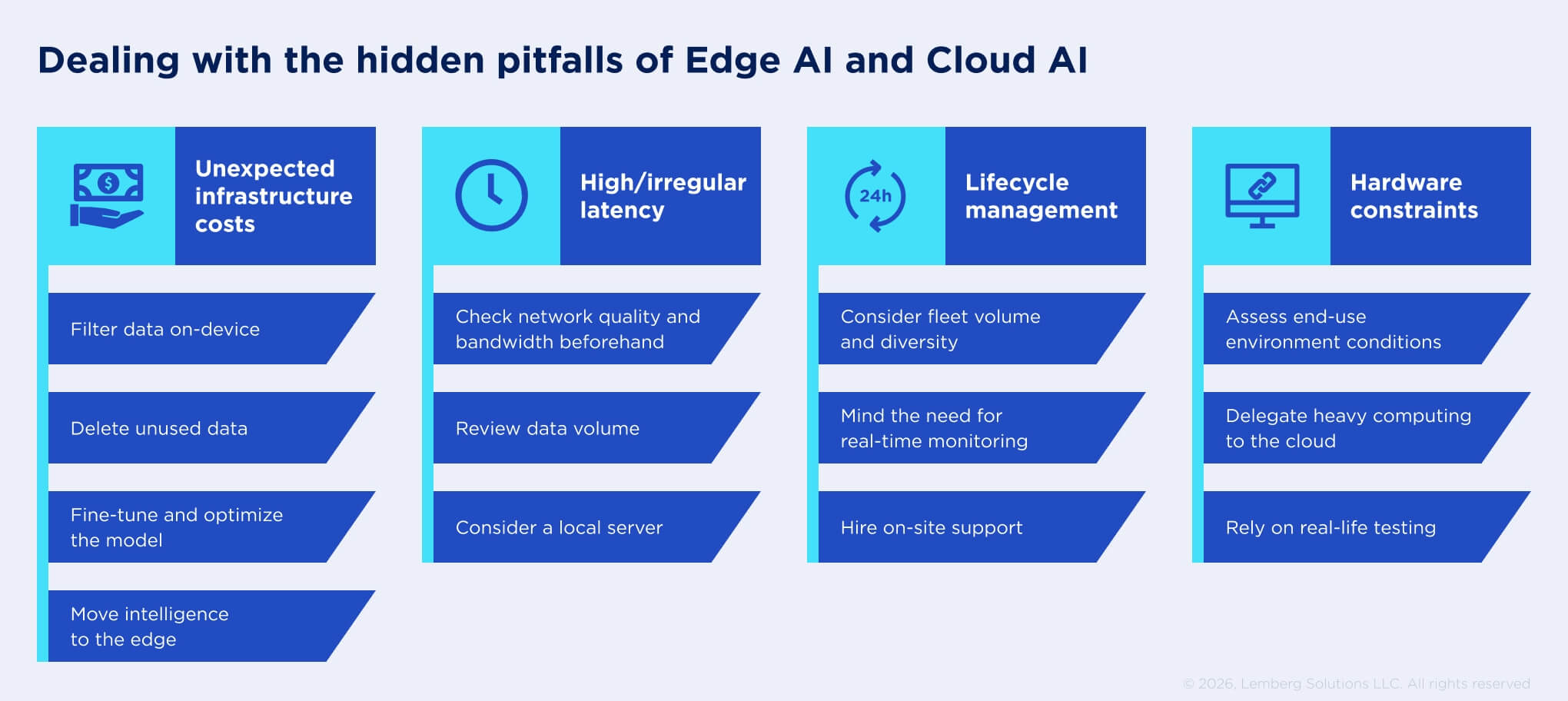

What businesses overlook in edge and cloud at the design stage

The above sections cover common architectural choices for AI applications based on the most obvious business requirements. But as development progresses, secondary constraints often emerge that force you to reconsider initial decisions or even cause losses. Our edge AI vs cloud AI performance comparison wouldn't be complete without considering these factors.

Network costs and bandwidth limits

As we mentioned earlier, cloud resources are unlimited, but only technically. When the bills for actual resource usage arrive, teams scramble to cut costs. Say you don't need real-time data processing, so you opt for the cloud. But your devices generate continuous data streams that congest the network and drive bandwidth costs so high they could have paid for your own edge GPUs.

Apart from bandwidth, unexpected costs may also arise from poorly managed cloud data egress, raw data transfers, unused data retention, and even an ML model that isn't optimized for your actual needs. To avoid such surprises, we recommend taking into account:

- What kind of raw data will your device collect (image, video, or audio), and how "heavy" is it?

- Which parts of the collected data should be analyzed by the AI? You may consider data segmentation and filtering on the device to reduce the traffic volume.

- Which parts of the data should be stored and for how long?

- Which specific AI tasks should the ML model solve? With some model optimizations, you can avoid redundancy and save on computing power needs.
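On-device filtering can be as simple as an energy gate that discards quiet frames before anything is sent. The sketch below assumes raw audio samples normalized to [-1, 1] and a hand-picked threshold; a production device would more likely use a small classifier, but the traffic-saving principle is the same.

```python
def gate_frames(samples: list[float], frame_len: int, threshold: float) -> list[list[float]]:
    """Keep only frames whose mean energy exceeds `threshold`.

    A trailing partial frame shorter than `frame_len` is ignored.
    """
    kept = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        energy = sum(x * x for x in frame) / frame_len
        if energy > threshold:
            kept.append(frame)
    return kept


FRAME = 100
# Synthetic signal: mostly near-silence with one loud burst at the end.
signal = [0.01] * 900 + [0.5] * 100
kept = gate_frames(signal, frame_len=FRAME, threshold=0.01)
total_frames = len(signal) // FRAME
print(f"upload {len(kept)} of {total_frames} frames ({len(kept) / total_frames:.0%})")
# prints: upload 1 of 10 frames (10%)
```

The achievable reduction depends entirely on how much of your real signal is uninteresting, so measure it on field recordings before building a business case on it.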

Compare how costs accumulate in cloud AI and edge AI infrastructures for data-heavy tasks:

| Cost factor | Cloud AI | Edge AI |
|---|---|---|
| Bandwidth | Large data transfers | Minimal use, can be neglected |
| Inference | Continuous workloads = growing fees | High upfront investment, but no ongoing inference fees |
| Data storage | Scalable but accumulating | Limited but controllable |

Not needing real-time data processing isn't necessarily a decisive argument for cloud AI. Your data processing needs and the cloud infrastructure required to handle them can end up draining your budget. Our architecture designers recommend considering the data volume factor and estimating potential resource expenses before making the choice.

Moving AI closer to your data, rather than moving data to AI, can be more cost-efficient at the end of the day. Modern edge video analytics capabilities are already influencing infrastructure decisions.
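A simple cost model helps make the data volume factor concrete before committing to an architecture. The function below assumes that transfer, storage, and inference costs all scale linearly with the data that leaves the device. The unit prices are placeholders, not quotes from any cloud provider, so substitute figures from your own plan.

```python
def monthly_cloud_cost(raw_gb: float, filtered_share: float, transfer_per_gb: float,
                       storage_per_gb_month: float, retention_months: int,
                       inference_per_gb: float) -> float:
    """Steady-state monthly bill for data that leaves the device.

    filtered_share: fraction of raw data dropped on the device (0.0 = send everything).
    Storage is charged on `retention_months` worth of uploaded data at any given time.
    """
    sent_gb = raw_gb * (1 - filtered_share)
    transfer = sent_gb * transfer_per_gb
    storage = sent_gb * retention_months * storage_per_gb_month
    inference = sent_gb * inference_per_gb
    return transfer + storage + inference


# Placeholder unit prices, not quotes from any provider: replace with your own.
PRICES = dict(transfer_per_gb=0.01, storage_per_gb_month=0.02,
              retention_months=3, inference_per_gb=0.05)
RAW_GB = 200_000  # 200 TB per month, as in the opening scenario

send_all = monthly_cloud_cost(RAW_GB, 0.0, **PRICES)
filter_90 = monthly_cloud_cost(RAW_GB, 0.9, **PRICES)
print(f"filtered bill is {filter_90 / send_all:.0%} of the unfiltered one")
# prints: filtered bill is 10% of the unfiltered one
```

Because every term in this model scales with uploaded data, a 90% on-device reduction cuts the whole bill by the same 90%; real pricing with fixed fees or volume tiers will behave less linearly.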

Latency expectations

No need for low latency doesn't mean no need for predictable latency. Although cloud AI architecture provides high resource and data resilience through timely updates, backups, and overall business continuity assurance, it still can't guarantee constant access. And without on-demand access to data and resources, cloud AI solutions lose much of their efficiency.

Your device’s connection with the cloud can be impacted by network instability, slow speeds, or insufficient bandwidth, all leading to unacceptable latency levels or even complete data packet loss. We’d say this is one of the most overlooked edge AI vs cloud AI differences, as it can make your automation loops unstable, users dissatisfied, and ROI negative. What to do?

Before making your choice:

- Consider how your data volume and streams can affect transfer speed.

- Check if your network provider and cloud plan can ensure the necessary bandwidth.

- Inspect on-site connectivity quality at the location intended for device installation. Remote destinations like factories, farms, and powerhouses can be particularly tricky in this respect.
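Predictability is best judged by tail latency rather than averages. The sketch below summarizes round-trip measurements with Python's standard `statistics.quantiles`. The samples are synthetic and the 250 ms budget is an arbitrary example; in practice, collect round-trip times at the actual installation site over representative periods.

```python
import statistics


def latency_report(rtt_ms: list[float], budget_ms: float) -> dict:
    """Summarize measured round trips and judge them by the 99th percentile."""
    cuts = statistics.quantiles(rtt_ms, n=100)  # 99 cut points: cuts[49] = p50, cuts[98] = p99
    p50, p99 = cuts[49], cuts[98]
    return {
        "mean": statistics.fmean(rtt_ms),
        "p50": p50,
        "p99": p99,
        "within_budget": p99 <= budget_ms,
    }


# Synthetic measurements: mostly fast, with occasional stalls on a flaky link.
samples = [80.0] * 95 + [900.0] * 5
r = latency_report(samples, budget_ms=250)
print(f"mean={r['mean']:.0f} ms, p50={r['p50']:.0f} ms, p99={r['p99']:.0f} ms, "
      f"within budget: {r['within_budget']}")
# prints: mean=121 ms, p50=80 ms, p99=900 ms, within budget: False
```

Here the median looks healthy while the 99th percentile blows through the budget, which is exactly the kind of unpredictability that destabilizes automation loops.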

Here's how unexpected latency or connectivity interruptions can generate additional costs in cloud AI and edge AI environments:

| Cost driver | Cloud AI | Edge AI |
|---|---|---|
| Lost business value | Latency increase → service interruptions | Autonomous operation = consistent performance |
| Backup mechanisms | Infrastructure duplication | Device duplication |
| Data backlog and recovery | Bandwidth spikes, potential data loss | Processed immediately, minimal backlog needed |

Cloud availability is tightly linked to network characteristics, and high-quality network connectivity is far from ubiquitous. Taking the time to find out which connectivity options you can count on is a wiser investment than planning extra sprints (and costs) for reengineering and issue fixing.

Device lifecycle management

Support and maintenance don't seem challenging until you have to manage thousands of devices, especially remote or disconnected ones, or several device models at once. You need a way to monitor device performance, prevent data and concept drift in your models, deliver updates, and install security patches.

In contrast to centralized cloud infrastructure, edge AI has a distributed nature. At scale, this introduces operational overhead and infrastructure management complexity. Moreover, model and system updates can become inconsistent across different deployment locations or device models. To understand if edge AI system maintenance will be a doable task or turn into an unmanageable nightmare, it’s important to answer several questions:

- How many devices and locations will we need to support? Are they the same?

- Will we need to update models often? How critical are timely, well-orchestrated updates?

- How close and regular should device monitoring be?

- How many resources and costs are we ready to allocate to system support?
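For large fleets, a common way to keep updates well orchestrated is a staged rollout: a small canary group first, then progressively wider waves, with a health gate in between. The sketch below is hypothetical; the 1%/10%/100% waves and the 2% failure threshold are illustrative defaults, not recommendations.

```python
def rollout_waves(device_ids: list[str], cumulative_pct=(1, 10, 100)) -> list[list[str]]:
    """Split a fleet into staged OTA waves: a small canary first, then wider rings.

    cumulative_pct: share of the fleet (in %) that should be updated by the end of each wave.
    """
    waves, done = [], 0
    for pct in cumulative_pct:
        target = -(-len(device_ids) * pct // 100)  # ceiling division, integer-only
        waves.append(device_ids[done:target])
        done = target
    return waves


def should_continue(failed: int, updated: int, max_failure_rate: float = 0.02) -> bool:
    """Gate the next wave (or trigger a rollback) on the health of updated devices."""
    return updated > 0 and failed / updated <= max_failure_rate


fleet = [f"dev-{i:05d}" for i in range(10_000)]
waves = rollout_waves(fleet)
print([len(w) for w in waves])  # [100, 900, 9000]
print(should_continue(failed=1, updated=100), should_continue(failed=5, updated=100))  # True False
```

The health gate is where edge fleets pay their operational overhead: every wave needs devices that report back reliably enough to judge.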

Here’s how infrastructure management costs compare for cloud AI and edge AI:

| Cost factor | Cloud AI | Edge AI |
|---|---|---|
| Model deployment and provisioning | Centralized rollout and API integration, low cost per new device | On-site installation, configuration, and testing are costly |
| Updates and patching | Update made once and applied to all users, minimal effort | Per-device OTA, version control, and rollback drive operational overhead |
| Monitoring and maintenance | Remote monitoring, management, and failure handling done centrally | Need resources for field service, spare hardware, downtimes |

In the edge AI vs cloud AI comparison, centralized cloud infrastructure is more cost-efficient and reliable for a large, widely distributed, mission-critical device fleet. But if your fleet is relatively small and can do without frequent model updates, edge AI can be a reasonable choice as well. This approach is also more viable for devices that need on-site adjustments and dedicated support staff.

Unforeseen hardware constraints

Edge AI devices can be used in a great variety of environments with conditions ranging from extreme temperatures to rigid size and weight limits. These conditions constrain not just the hardware that can run an AI model, but also other system components responsible for data collection, power supply, storage, and memory.

To meet functional and environmental requirements, engineers must strictly prioritize certain elements and leave out others, which eventually shapes the architecture choices. Suppose a smart device must be wearable and detect critical health conditions. In this case, an edge architecture with optimized real-time vitals processing will be more relevant than the cloud, which offers more advanced analytics but higher latency.

But some decisions aren’t as obvious. Take, for instance, predictive maintenance cameras for manufacturing. Since video data is high in volume and expensive to transfer, it looks logical to implement edge AI and run the computer vision model directly on the cameras. But testing in real-world conditions reveals that the camera hardware starts overheating due to poor facility ventilation, which impacts the GPU performance. As a solution, we can move predictive analytics to the cloud, as it doesn’t require low latency. To deal with the bandwidth cost issue, we can leave only lightweight video preprocessing on the edge.

Hardware constraints therefore cause significant cost differences between cloud AI and edge AI infrastructures:

| Cost factor | Cloud AI | Edge AI |
|---|---|---|
| Compute capacity | Unlimited compute on demand, but needs cost monitoring | High-performance ML requires expensive embedded accelerators |
| Power and thermal limits | Irrelevant, infrastructure handled by a provider | Significant power and heat generation drives the need for cooling or low-power hardware |
| Physical limits | Irrelevant, infrastructure handled by a provider | Costs for custom on-site mounting, cabling, ventilation, power supply |

Not all infrastructure choices between edge AI and cloud AI can rely solely on technical hardware characteristics. Some also require hands-on domain experience that helps account for side factors long before the real-world testing stage.

Summing up this section, it's important to account for edge cases and limitations that are hard to foresee. Predicting them depends on a unique mix of conditions, including the core function, users, scale, tech stack, and budget. You can leverage the vast experience of embedded development consultants at Lemberg Solutions to assess the architecture needs for your solution more accurately on a case-by-case basis.

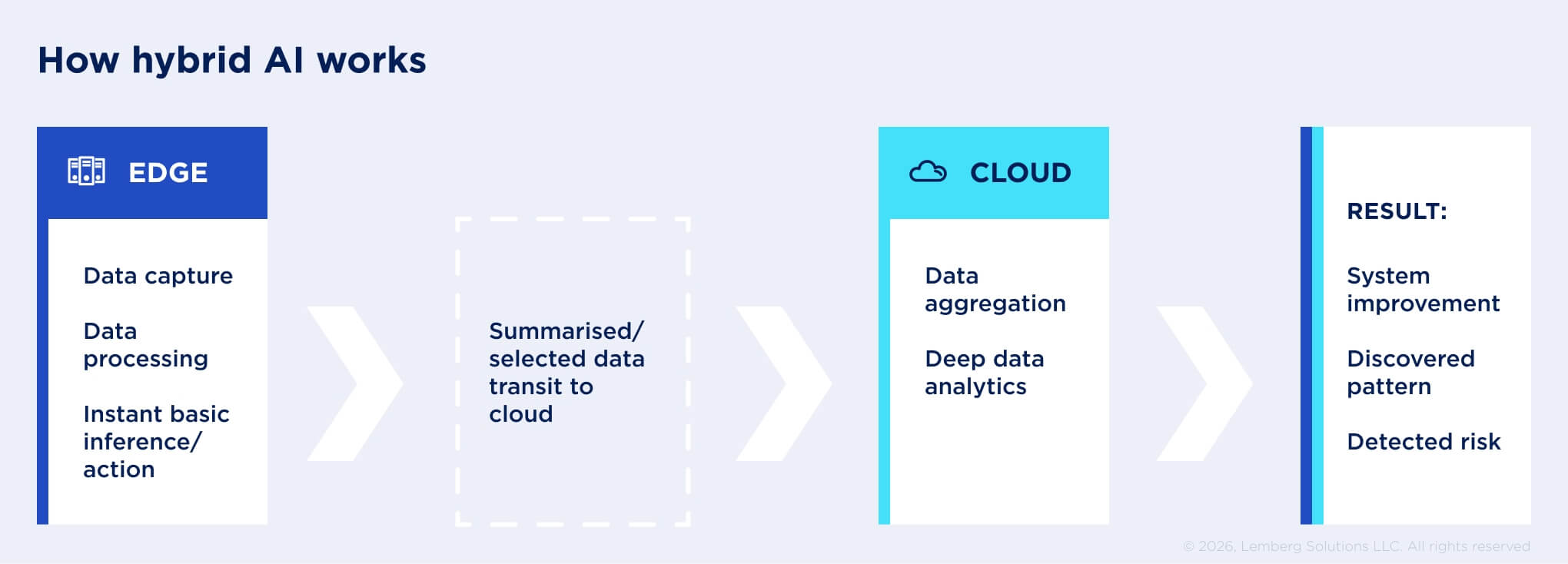

The hybrid approach: combining edge AI and cloud AI

Since relying exclusively on edge AI or cloud AI imposes strict limitations, most modern embedded AI systems combine both in a hybrid architecture. In this scenario, the edge is responsible for data collection, preprocessing, real-time inference, and autonomous operation. Meanwhile, the cloud handles model training, task coordination, deep analytics, system updates, and scaling.

Within the hybrid architecture, the edge artificial intelligence system operates close to where data is generated: devices, machines, vehicles, or even directly on sensors. Also, it is often designed to function without a continuous power supply and connectivity. This defines the range of its typical tasks:

- Basic, specialized real-time inference (sound recognition, anomaly detection, object classification)

- Local decision-making and control (move the camera to follow a detected object)

- Filtering or compressing raw data before transferring it to the cloud (extracting fragments with a detected object from a video stream)

- Operating autonomously during network and power outages (thanks to battery power, temporary data storage, and on-device inference)

- Enforcing privacy (face blurring on images from surveillance)

The cloud, as a more powerful part of the hybrid system, provides deep analytics, big data and long-term storage, and process orchestration. It cannot eliminate latency, so it handles non-time-sensitive but resource-consuming tasks:

- Training and retraining AI models (as part of both model design and maintenance)

- Aggregating data from many edge locations (resulting in deeper analytics like trend detection, predictions, recommendations)

- Long-term data storage and analytics (for business intelligence, operational needs)

- Fleet management of devices (device monitoring, model and software updates, centralized scaling)

Use case: optimizing the data pipeline with hybrid AI

In one of our projects, we had the opportunity to build a hybrid AI infrastructure. Our client needed to deploy an AI-powered telemedicine system for cough sound recognition.

The system had to support the connection between a patient at home and their doctor. It included a device or a mobile app for patients and a web platform for doctors. The device or mobile app recognizes the cough sounds from the stream of other sounds, extracts medically relevant data, and transfers it to the doctor-facing web platform. The cloud-hosted platform runs analytic and diagnostic algorithms, helping the doctor identify the patient’s health condition based on the cough sound characteristics.

In this case, a hybrid architecture was the best choice, as we needed two consecutive data processing stages. First, we ran the sound recognition algorithm directly on the device to extract only the relevant data. The filtered and compressed data are easier and cheaper to transfer to the cloud. Then, the cloud resources process and store only medically meaningful data, which ensures accurate analysis results and also reduces the client's costs for cloud storage and computing power.
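The two consecutive stages can be sketched as follows. This is a simplified illustration, not the client's system: a peak-amplitude rule (`is_cough_stub`, hypothetical) stands in for the on-device sound recognition model, JSON plus `zlib` stands in for the real payload format, and the cloud stage only reports what it received.

```python
import json
import zlib
from typing import Callable


def is_cough_stub(segment: dict) -> bool:
    """Hypothetical stand-in for the on-device sound recognition model."""
    return segment["peak"] > 0.6


def edge_stage(segments: list[dict], is_cough: Callable[[dict], bool]) -> bytes:
    """Device side: keep only segments the detector flags, then compress them."""
    relevant = [s for s in segments if is_cough(s)]
    return zlib.compress(json.dumps(relevant).encode("utf-8"))


def cloud_stage(blob: bytes) -> dict:
    """Cloud side: unpack the upload and run heavier analysis (placeholder)."""
    relevant = json.loads(zlib.decompress(blob).decode("utf-8"))
    return {"segments_analyzed": len(relevant), "ids": [s["id"] for s in relevant]}


segments = [
    {"id": 1, "peak": 0.9},   # cough-like
    {"id": 2, "peak": 0.1},   # background noise
    {"id": 3, "peak": 0.7},   # cough-like
    {"id": 4, "peak": 0.2},   # speech or TV
]
print(cloud_stage(edge_stage(segments, is_cough_stub)))
# prints: {'segments_analyzed': 2, 'ids': [1, 3]}
```

The split keeps the cheap, always-on decision on the device and reserves cloud compute and storage for the data that actually matters.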

Benefits of hybrid AI: cost savings and more

Hybrid AI architecture takes the best from the edge and cloud AI without unwanted trade-offs. For businesses implementing embedded AI solutions, this brings a multitude of benefits:

Cost savings. You reduce data transfer fees by preprocessing and filtering the collected data on the edge, and you don't have to invest in expensive hardware for resource-intensive tasks. In the context of sound recognition for 10K devices, you can save around $25K compared to a purely cloud architecture and up to $80K (including ML-capable hardware investment) compared to an entirely edge-based infrastructure.

Time savings. You don't have to reengineer the entire system if you overlooked an important factor at the discovery or design stage. Just move a task from the edge to the cloud, or vice versa, within your single infrastructure. Also, compared to an edge-only architecture, a hybrid can cut the development timeline by up to 40% thanks to easier model optimization, parallel development, and a reduced testing scope.

Resilience and availability combined. Your AI-enabled device remains functional on the edge regardless of connectivity and power supply. At the same time, cloud backups and reliability mechanisms protect all the data you store.

Simple scalability and maintenance. You can monitor all components of your distributed system in one place, roll out centralized OTA updates, patches, and model improvements, and know whether and when they are delivered. This also reduces the cost of the more complex lifecycle management that a fully edge-based system requires.

Privacy and compliance addressed. Having the choice of where to process and store the different types of data your system collects and analyzes, you get more control and flexibility in meeting domain-specific data security requirements.

Why do specifications account for only 20% of success?

Considered separately, neither cloud AI nor edge AI architecture is ideal: each requires significant investment to function and generate value, be it up-front or ongoing. As a result, the cost gap between edge AI and cloud AI is much smaller than it seems at first glance. However, combining cloud and edge in the right proportions yields economically feasible results.

Deep professional expertise and domain experience continue to play a key role in designing functional, ROI-driving solutions, especially for distributed and field use. A lack of hands-on experience and widespread biases about edge or cloud AI often lead to financial and operational surprises:

| Presumption | Consequence |
|---|---|
| Cloud infrastructure requires lower investment than AI-capable edge hardware. | More expenses long-term |
| Network bandwidth has no limits, and I can transfer as much data as I need. | Skyrocketing cloud fees |
| Modern internet networks guarantee predictable latency. | Service interruptions undermine operational ROI |
| Hardware datasheets account for edge cases and extreme environmental conditions. | Wasted investment, additional costs for reengineering |
| Edge deployments don't need frequent updates and dedicated support. | Model drift, poor performance |
| Data stays on-device, so security is not a concern. | Legal liabilities, data leakage |

When designing system architecture, it might seem that technical specs like latency, compute, bandwidth, or model size determine whether your system will work. But in truth, they describe a hypothetical, isolated system, our experts conclude. In our work with clients, we see that 80% of success usually depends on unpredictable factors, such as unstable networks, environmental constraints, or human workflows.

While available sources can give broad answers, they often lack the nuance of real-world system performance. Generalized information isn't enough to determine whether your processor can run a given ML model or how much bandwidth real-time data streaming will need. At Lemberg Solutions, our embedded development engineers provide consultancy backed by hands-on project experience, offering the technical precision your business requires.